Time is our measure of a constant beat. We use seconds, minutes, hours, days, weeks, months, years, decades, centuries, etc. But what if we measured time against rituals, chores, tasks, stories, and narratives? How can we use memory, prediction, and familiar and unfamiliar narratives to tell time?

As a child, I remember using the length of songs to measure how much time was left during a trip. A song was a convenient unit, easy to multiply to get a grasp of any larger measure, like the time left until we arrived at our grandmother’s place. The length of a song was also a measure I could digest and understand in an instant.

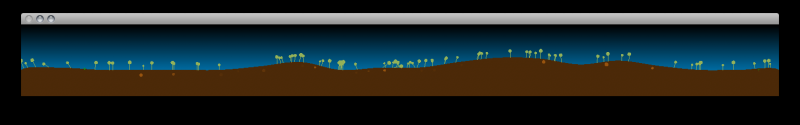

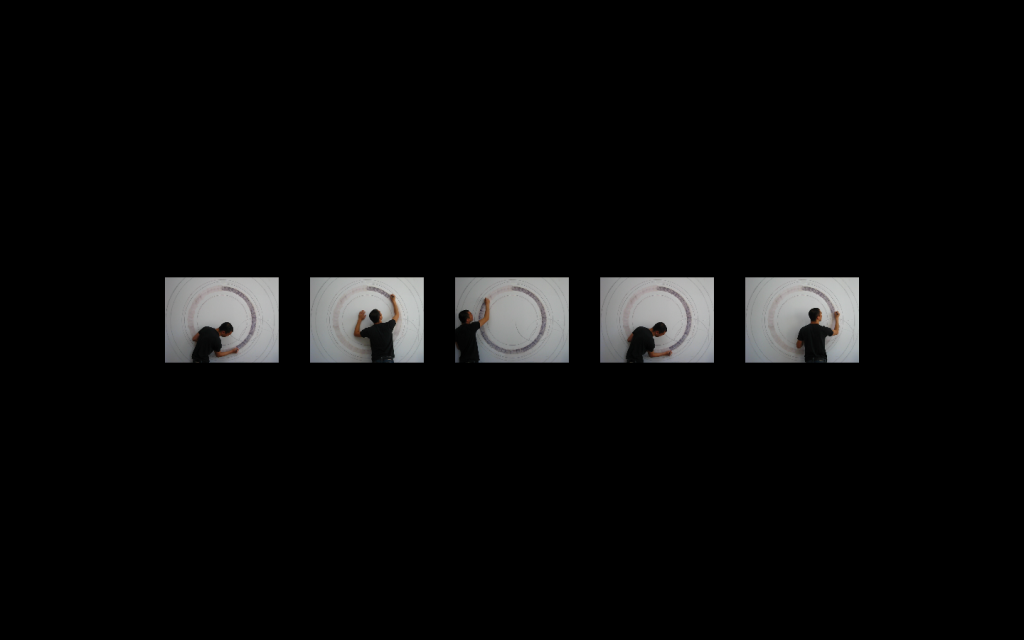

The first iteration of Cinematic Timepiece consists of five video loops playing at five different speeds on a single screen. Each loop shows a person coloring in a large circle on a wall.

The frame furthest to the right is a video loop that completes a cycle in one minute. The video to the left of the minute loop completes its cycle in one hour. The next completes in a day, then a month, then a year.
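The mapping from clock time to loop position can be sketched in a few lines. This is only an illustration in Python, not the openFrameworks code used in the piece. It assumes each loop is aligned to the calendar (the minute loop restarts at second zero, the day loop at midnight, and so on), which the description above does not specify. The names `loop_phases` and `frame_index` are hypothetical.

```python
import calendar
from datetime import datetime


def loop_phases(now: datetime) -> dict:
    """Return how far through each loop we are, as a fraction from 0.0 to 1.0.

    Assumption: every loop is aligned to the calendar, so the minute loop
    restarts at second 0, the day loop at midnight, the year loop on Jan 1.
    """
    minute = (now.second + now.microsecond / 1e6) / 60.0
    hour = (now.minute + minute) / 60.0
    day = (now.hour + hour) / 24.0

    # Months and years vary in length, so look the lengths up.
    days_in_month = calendar.monthrange(now.year, now.month)[1]
    month = ((now.day - 1) + day) / days_in_month

    days_in_year = 366 if calendar.isleap(now.year) else 365
    day_of_year = now.timetuple().tm_yday  # 1-based
    year = ((day_of_year - 1) + day) / days_in_year

    return {"minute": minute, "hour": hour, "day": day,
            "month": month, "year": year}


def frame_index(phase: float, frame_count: int) -> int:
    """Pick the video frame to show for a given phase (hypothetical helper)."""
    return min(int(phase * frame_count), frame_count - 1)
```

Calling `loop_phases(datetime.now())` once per drawn frame and passing each phase to `frame_index` would keep all five loops in step with the clock, so a glance at how much of each circle is colored in reads as the current second, minute, hour, day, and month.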

Through successive iterations, we intend to experiment with different narratives and rituals, each captured in a video loop to be read as a measure of time.

The software was written in openFrameworks for a single screen. In the future, we plan to expand it to multiple screens as a piece of hardware.

Cinematic Timepiece is being developed in collaboration with Taylor Levy.

Download the fullscreen app version [http://drop.io/cinematicTimepiece#]